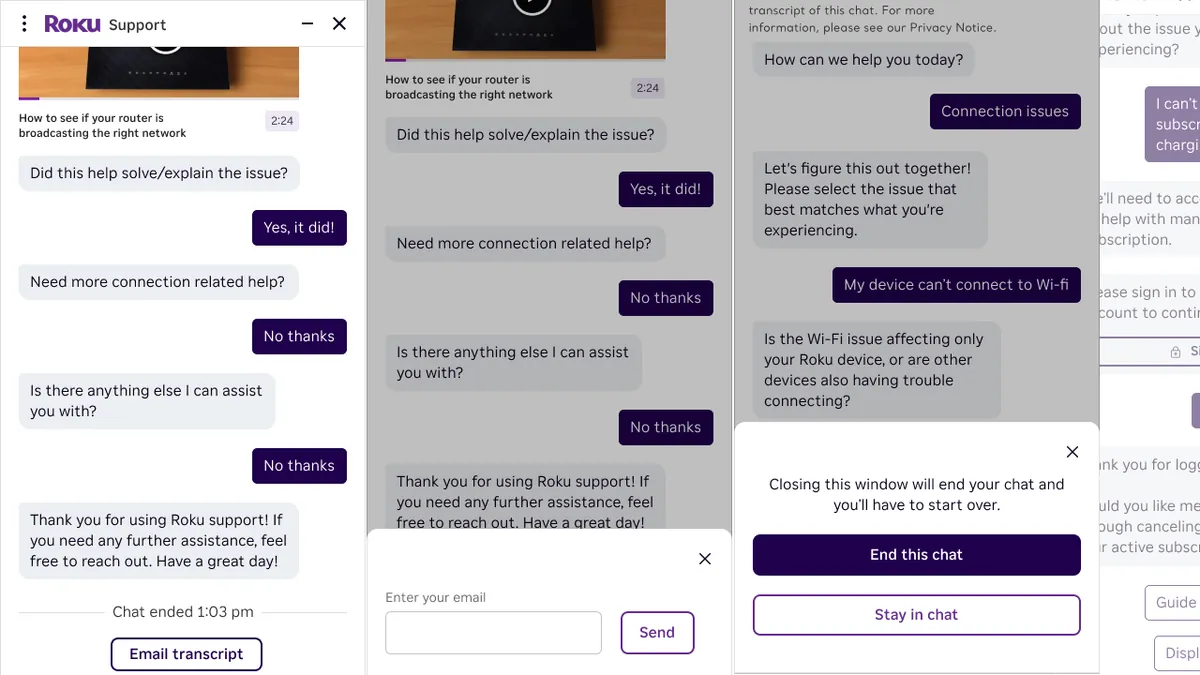

What we built

I was the technical lead on an AI chatbot for Roku's customer support. The system plugs into multiple LLM providers (Claude V2, GPT-3/4) through AWS Connect to handle customer queries with natural language understanding.

Results

- 40% fewer support tickets

- 25% higher customer satisfaction scores

- 95% accuracy on automated query resolution

- Response time went from hours (human queue) to seconds

How it works

The platform pairs AWS Connect for comms infrastructure with a custom AI orchestration layer in React and Node.js:

- Multi-LLM routing picks the right model per query (Claude for nuanced reasoning, GPT for speed)

- RAG pipeline pulls from Roku's knowledge base so answers stay accurate and contextual

- Escalation to humans happens automatically for complex issues, with full conversation context passed along

- Feedback loop uses interaction data to keep improving resolution accuracy
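
To make the routing and escalation bullets concrete, here is a minimal TypeScript sketch. It is an illustration under stated assumptions, not the production logic: every name (`route`, `needsNuancedReasoning`, `shouldEscalate`), the length and question-count heuristic, and the two-failure threshold are hypothetical.

```typescript
// Hypothetical types; none of these names come from the actual system.
interface Turn {
  role: "customer" | "bot";
  text: string;
}

type RoutingDecision =
  | { kind: "model"; model: "claude" | "gpt" }
  | { kind: "human"; context: Turn[] };

// Assumed heuristic: long or multi-question messages count as "nuanced".
function needsNuancedReasoning(text: string): boolean {
  const questionCount = (text.match(/\?/g) || []).length;
  return text.length > 200 || questionCount > 1;
}

// Assumed triggers: the customer asks for a person, or the bot has
// already failed to resolve the issue twice.
function shouldEscalate(text: string, failedAttempts: number): boolean {
  return /\b(agent|human|representative)\b/i.test(text) || failedAttempts >= 2;
}

// Decide where the next customer message goes.
function route(history: Turn[], message: string, failedAttempts: number): RoutingDecision {
  if (shouldEscalate(message, failedAttempts)) {
    // Pass the full transcript along so the agent does not start from zero.
    return { kind: "human", context: [...history, { role: "customer", text: message }] };
  }
  return { kind: "model", model: needsNuancedReasoning(message) ? "claude" : "gpt" };
}
```

In this sketch a short single-question message goes to the faster model, a multi-part message goes to the model used for nuanced reasoning, and a request for a person returns the whole conversation for handoff.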

Technical details

- React chat widget on the frontend, Node.js orchestration layer, AWS Connect for the communication backbone

- Conversation context management keeps multi-turn dialogues coherent

- Fallback strategies make sure no query goes unanswered

- Monitoring dashboards track resolution rates, escalation patterns, and per-model performance
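
The context-management and fallback bullets can be sketched in the same spirit. This is a minimal TypeScript example under assumptions: `trimContext`, `answerWithFallback`, and the character budget (standing in for a token budget) are hypothetical names and choices, and the responders are plain functions rather than real provider clients.

```typescript
// Hypothetical types; not taken from the actual codebase.
interface Turn {
  role: "customer" | "bot";
  text: string;
}

// Keep the most recent turns that fit within a character budget,
// preserving their original order.
function trimContext(history: Turn[], maxChars: number): Turn[] {
  const kept: Turn[] = [];
  let used = 0;
  for (const turn of [...history].reverse()) {
    if (used + turn.text.length > maxChars) break;
    used += turn.text.length;
    kept.unshift(turn);
  }
  return kept;
}

// A responder wraps one model call; assumed to reject on provider errors.
type Responder = (prompt: string) => Promise<string>;

// Try each responder in order and return the first non-empty reply.
// If all of them fail, return a canned last-resort message so the
// customer always gets an answer.
async function answerWithFallback(
  prompt: string,
  responders: Responder[],
  lastResort: string
): Promise<string> {
  for (const respond of responders) {
    try {
      const reply = await respond(prompt);
      if (reply.trim().length > 0) return reply;
    } catch {
      // Provider failed; fall through to the next responder.
    }
  }
  return lastResort;
}
```

Ordering the responders from preferred to least preferred keeps the fallback policy in one place, and the last-resort string could just as well be a message that hands the conversation to a human.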

In the press

Quiq covered Roku's AI transformation using the platform we built in "Roku's Agentic AI CX Transformation."